
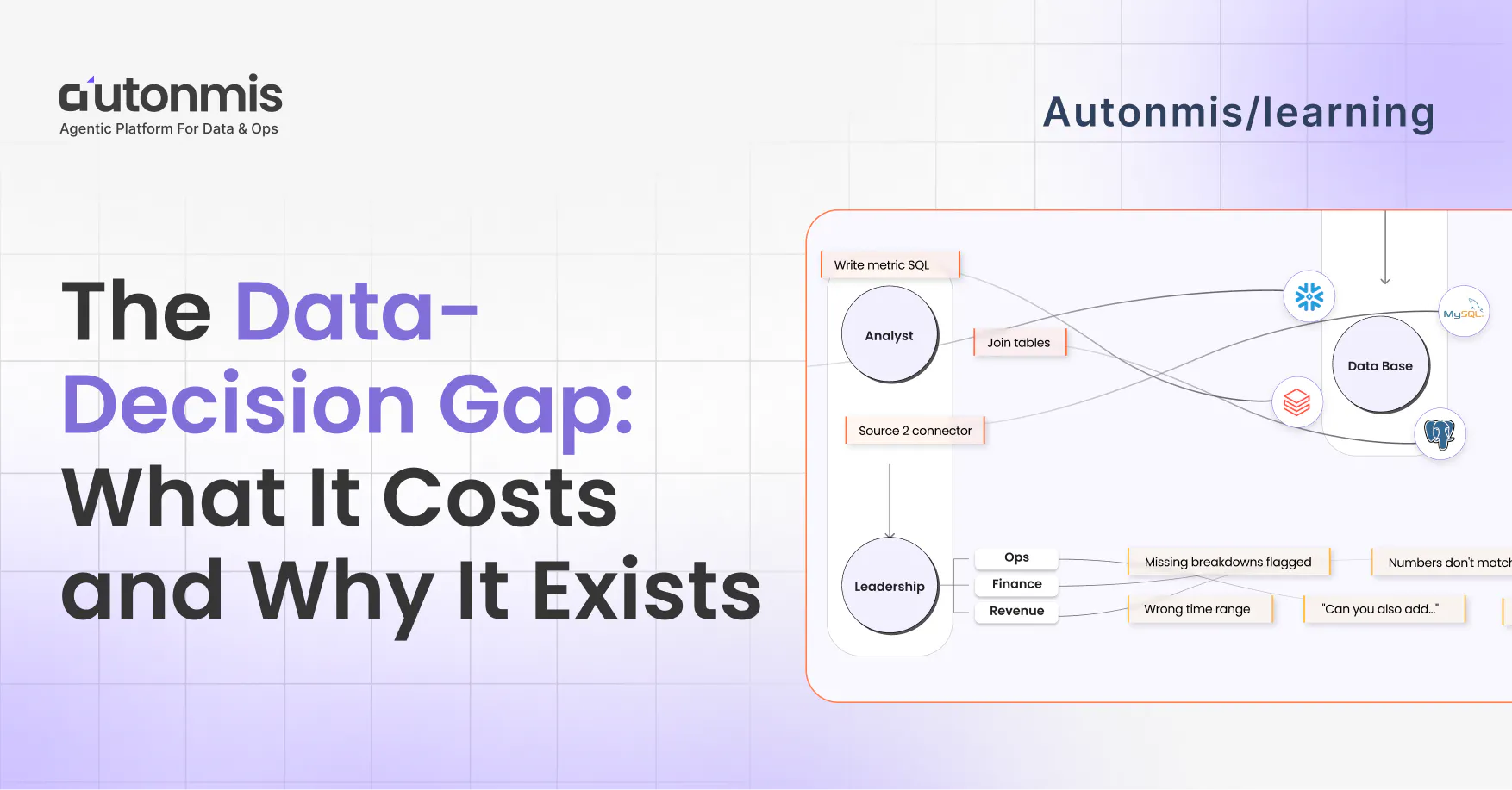

The Data-to-Decision Gap: What It Costs and Why It Exists

Explore the data-to-decision gap in mid-market companies. Learn why it exists, its impact on operations, and how to streamline decision-making processes.

May 4, 2026

Abhranshu

What is the data-to-decision gap?

The data-to-decision gap is the delay between when something happens in a business and when the right person has the information to act on it. In most mid-market companies, this gap runs 24 to 48 hours for routine operational questions and several days for anything requiring a report. It exists because information moves through people instead of systems, and people have queues, competing priorities, and other things to do.

There is a version of this meeting that happens at most companies.

A leader asks a question. "What's our conversion rate this week?" Or "Why is fulfillment time up?" Or "How many open exceptions are sitting unresolved right now?"

Someone is assigned to find out. That person has a backlog. The question joins the queue.

Two days later, the answer arrives. As a report. In a deck. In an email.

By then, the meeting where it was needed has already happened. The decision was made without it, or delayed waiting for it, or made on last week's numbers because nobody wanted to wait another day.

This is not a bad week. This is the default operating state for most mid-market companies.

Not because they are badly run. Because the infrastructure was built this way, before it was possible to do it differently.

Why the Gap Exists

The way most companies move information from their systems to the people making decisions was designed for a different era.

Smaller data volumes. Fewer data sources. AI could not generate accurate, schema-specific SQL. Pipelines required constant human supervision. Monitoring meant someone had to watch a dashboard.

None of those things are true anymore. But the process has not been redesigned.

Companies kept adding people to manage a chain that was built to be managed by people, because until recently, there was no alternative.

The chain looks like this in practice.

A leader needs to know what is happening in their business. They ask a question in a meeting or on Slack. Someone is assigned to get the answer. That person has a queue. When they get to it, they pull the data from wherever it lives, clean it, join it across tables, apply the right filters, and format it into something readable. Two to three days later, on a good week, the answer arrives.

By then, the moment has passed.

Check out: How Real-Time Ops Intelligence Enables Faster Decisions

The Five Structural Failures Behind the Gap

The data-to-decision gap is not one problem. It is five structural failures that compound on each other. Fixing one does not close the gap. All five are connected.

1. Information moves through people instead of systems

Every data question has a human in the middle. That human has a queue, competing priorities, and other work to do.

Every time that person is unavailable, sick, on leave, or simply overloaded, information stops moving. The business runs on whatever picture it had the last time someone had time to update it.

This is not a staffing problem. The backlog grows faster than the team does. Every company that tries to solve this by hiring discovers that the new hire creates their own queue within three months.

2. Knowledge lives in individuals, not platforms

The logic behind a company's key metrics usually lives in the head of the analyst who built them.

What counts. What gets excluded. Which table is the source of truth. Why a specific filter exists. Why a join is written a particular way. Why a column cannot be trusted before a certain date.

None of this is usually written down. It did not need to be. The analyst was there. If someone needed to know, they asked.

When that analyst leaves, the logic leaves with them.

The new person joins. They spend their first three weeks asking questions the previous analyst would have answered in thirty seconds. Meanwhile, reports still need to go out. Someone runs them on their best understanding of the definitions. Numbers come back slightly different from last month. A meeting gets called to explain why. Nobody can explain it satisfactorily because the person who built the original logic is gone.

This cycle repeats with every departure. At a company with normal analyst turnover, the institutional knowledge of how the company's own data works degrades continuously. Most leaders have no idea this is happening because the reports keep arriving. They just quietly trust them a little less each quarter.

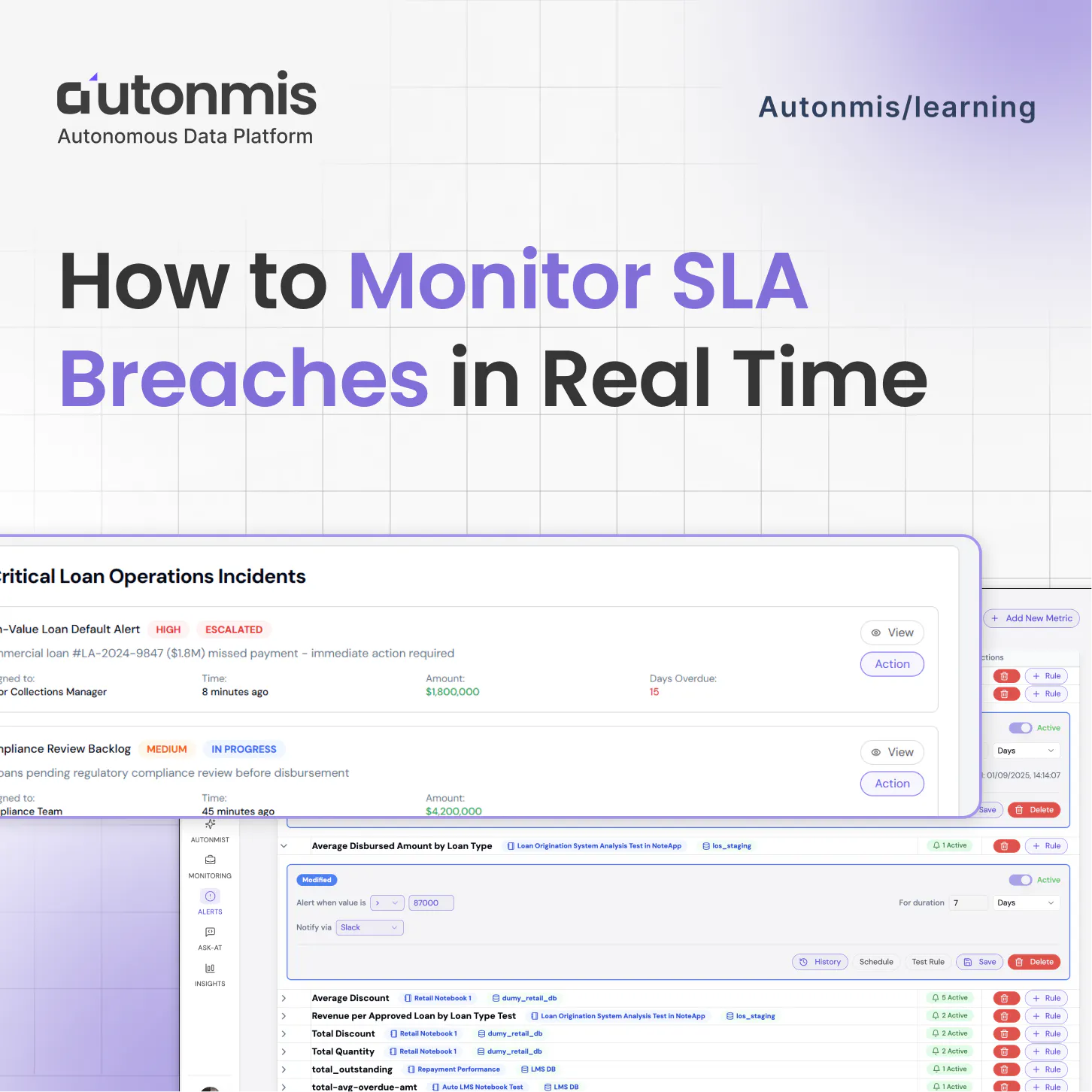

3. Monitoring requires humans to watch

Most companies find out about operational problems the same way.

A team member notices. A customer escalates. An email arrives. By the time the right person knows, the problem has been sitting there for hours.

A fulfillment error caught at 2pm on Tuesday is a 15-minute fix. The same issue discovered Friday afternoon is a weekend problem and a customer complaint. A missed SLA noticed within the hour is a quick remediation. The same SLA noticed three days later is a penalty, a post-mortem, and an apology call.

According to IDC research on operational efficiency, the gap between when an operational exception occurs and when the right person has context to act is typically 24 to 48 hours in mid-market companies. In fast-moving operations, that gap is where exceptions compound into escalations and escalations into revenue leakage.

There is no system watching continuously. There is only whoever happens to notice first.
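The alternative, a system that watches instead of a person, can be sketched in a few lines. The following is a minimal, hypothetical Python sketch, assuming metric readings are already available and each watched metric has a threshold and a named owner. The `Rule`, `Alert`, and `check` names are illustrative only and do not describe any particular product's API.

```python
from dataclasses import dataclass
from datetime import datetime

# Hypothetical rule: which metric to watch, its limit, and who owns the response.
@dataclass(frozen=True)
class Rule:
    metric: str
    threshold: float
    owner: str

@dataclass(frozen=True)
class Alert:
    metric: str
    value: float
    threshold: float
    owner: str
    observed_at: datetime

def check(readings: dict[str, float], rules: list[Rule], now: datetime) -> list[Alert]:
    """Return one alert per rule whose metric is above its threshold, routed to its owner."""
    alerts = []
    for rule in rules:
        value = readings.get(rule.metric)
        if value is not None and value > rule.threshold:
            alerts.append(Alert(rule.metric, value, rule.threshold, rule.owner, now))
    return alerts

# Hypothetical rules and readings for illustration.
rules = [
    Rule("avg_fulfillment_hours", 24.0, "ops-manager"),
    Rule("open_exceptions", 50.0, "ops-lead"),
]
readings = {"avg_fulfillment_hours": 31.5, "open_exceptions": 12}

for alert in check(readings, rules, datetime(2026, 5, 5, 14, 0)):
    print(f"notify {alert.owner}: {alert.metric}={alert.value} exceeds {alert.threshold}")
# prints: notify ops-manager: avg_fulfillment_hours=31.5 exceeds 24.0
```

In practice the readings would come from a scheduled query and the notification would go to a chat channel, email, or ticketing system. This sketch only shows the compare-and-route step.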

4. Metrics have no agreed definition

Ask three people at most companies how a key metric is calculated. Revenue. Customer churn. Conversion rate. Cycle time.

You get three different answers. All plausible. None the same.

This is not a communication failure. It is the absence of a governed definition.

Every report is assembled by whoever runs it, using their interpretation of what the metric means. One analyst excludes trial accounts. Another does not, because that was never documented as required. One report uses the order date. Another uses the ship date. One team counts active customers by login in the last 30 days. Another counts by any transaction in the last 90 days.

All reasonable. All different.
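To make the divergence concrete, here is a small Python sketch that applies the two "active customer" rules described above (login in the last 30 days versus any transaction in the last 90 days) to the same records. The customer records, field names, and dates are made up for illustration.

```python
from datetime import date, timedelta

# Made-up customer records (hypothetical fields): last login and last transaction dates.
customers = [
    {"id": 1, "last_login": date(2026, 4, 30), "last_txn": date(2026, 1, 20)},
    {"id": 2, "last_login": date(2026, 1, 15), "last_txn": date(2026, 4, 1)},
    {"id": 3, "last_login": date(2026, 4, 20), "last_txn": date(2026, 4, 25)},
    {"id": 4, "last_login": date(2025, 12, 1), "last_txn": date(2025, 11, 3)},
    {"id": 5, "last_login": date(2026, 2, 1), "last_txn": date(2026, 4, 28)},
]
AS_OF = date(2026, 5, 4)

def active_by_login(c, as_of=AS_OF, days=30):
    # Team A's rule: logged in within the last `days` days.
    return as_of - c["last_login"] <= timedelta(days=days)

def active_by_transaction(c, as_of=AS_OF, days=90):
    # Team B's rule: any transaction within the last `days` days.
    return as_of - c["last_txn"] <= timedelta(days=days)

team_a = {c["id"] for c in customers if active_by_login(c)}
team_b = {c["id"] for c in customers if active_by_transaction(c)}
print(len(team_a), len(team_b))  # 2 3
print(sorted(team_a ^ team_b))   # [1, 2, 5]
```

Same data, two counts, and only customer 3 is active under both rules. Neither rule is wrong. What is missing is a record of which rule a given report used.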

The result: every leadership review starts with ten to fifteen minutes of establishing which number is correct before anyone can discuss what to do about it. The meeting meant for decisions becomes a meeting about the data.

5. The data team cannot scale with the business

Every new question joins a backlog. Every new dashboard is another thing to maintain. Every new data source is another connector to build and keep running.

The data team grows, but the backlog grows faster.

Ops teams learn to ask for less. Not because they need less visibility, but because asking takes effort and the wait is frustrating. They operate with less information than they need because the alternative feels slower than just making a call without the data.

Analysts spend 40 to 60 percent of their time on data preparation: pulling, cleaning, formatting, and re-explaining the same data to different stakeholders. That is not analysis. That is logistics. The analyst was hired to find insight. Most of their week goes to moving information from one place to another.

Data engineers spend 30 to 50 percent of their time on pipeline maintenance. Not building new things. Keeping existing things from breaking. A schema change upstream breaks a downstream query. A connector goes down overnight. An engineer's entire day disappears into fixing infrastructure instead of building capability.

(Sources: IDC Data and Analytics Survey 2024; McKinsey Global Institute, The Age of Analytics)
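One source of that maintenance load is an upstream schema change that silently breaks a downstream query. This can be caught before the query runs by comparing the columns the query expects with what the source currently exposes. Below is a minimal sketch. It assumes the current column names and types have already been read from the source, for example from `information_schema.columns` in a SQL database. The `orders` table and its expected schema are hypothetical.

```python
# Hypothetical expected schema for an "orders" table, as a downstream query relies on it.
EXPECTED = {"order_id": "integer", "order_date": "date", "ship_date": "date", "amount": "numeric"}

def schema_drift(expected: dict[str, str], actual: dict[str, str]) -> dict[str, list[str]]:
    """Compare expected vs. actual column->type maps and report what changed."""
    missing = sorted(set(expected) - set(actual))
    added = sorted(set(actual) - set(expected))
    retyped = sorted(col for col in set(expected) & set(actual) if expected[col] != actual[col])
    return {"missing": missing, "added": added, "retyped": retyped}

# Suppose upstream renamed ship_date to shipped_at and changed amount to text.
actual = {"order_id": "integer", "order_date": "date", "shipped_at": "date", "amount": "text"}

drift = schema_drift(EXPECTED, actual)
if any(drift.values()):
    print("schema drift detected:", drift)
# schema drift detected: {'missing': ['ship_date'], 'added': ['shipped_at'], 'retyped': ['amount']}
```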

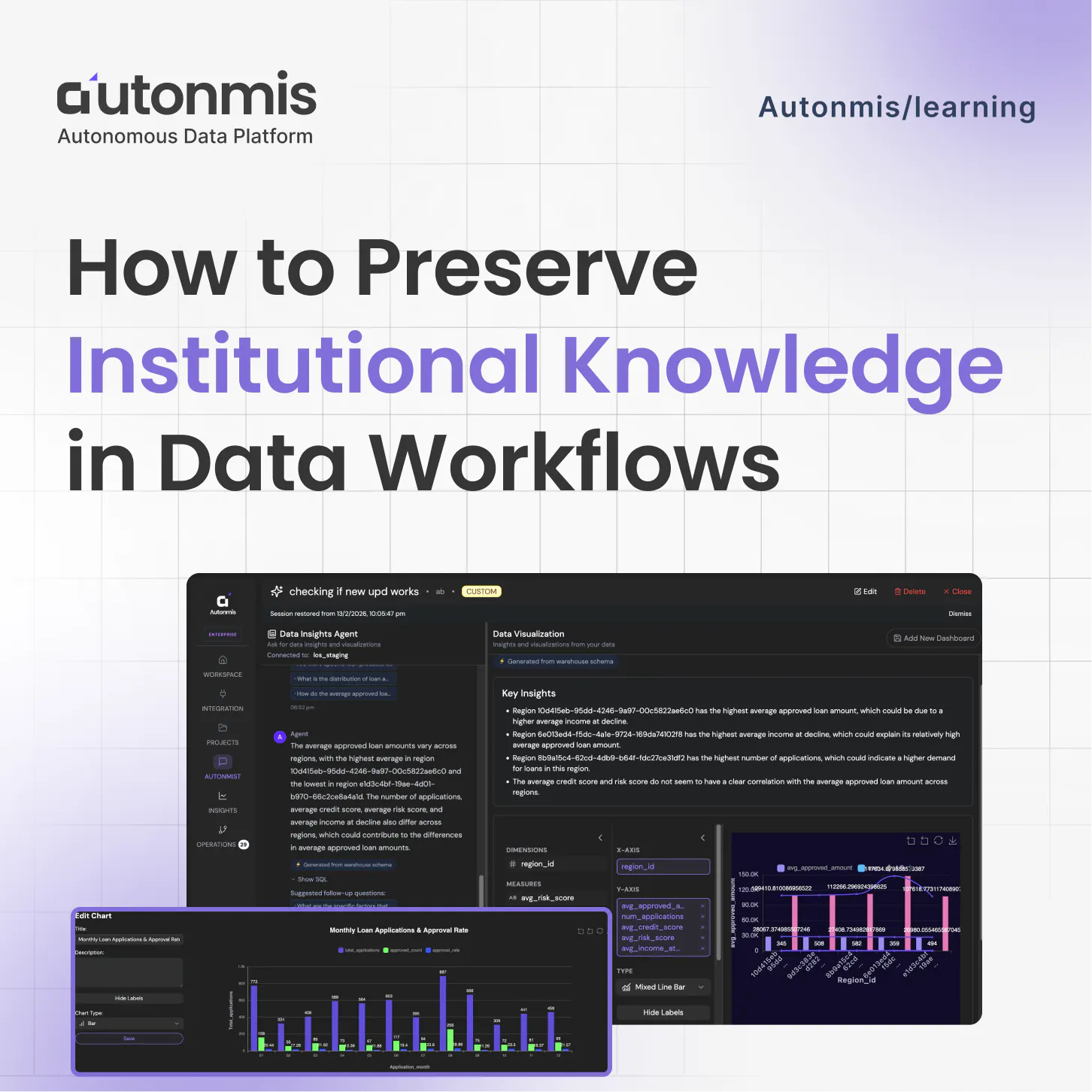

Check out: How to Preserve Institutional Knowledge in Data Workflows

What This Actually Costs

Most companies respond to data and ops bottlenecks the same way. Hire more people.

Here is what that costs per year, fully loaded, in the US:

- A data engineer: $120,000 to $160,000 per year. Four to six months to hire. Three months to ramp.
- A BI analyst: $90,000 to $130,000. Dashboards go stale within a quarter.
- A data analyst: $80,000 to $110,000. SQL logic in their head, not in version control.
- An ops manager: $75,000 to $100,000. Their workflow knowledge disappears when they leave.

A four-person team: $365,000 to $500,000 per year. Each person a single point of failure.
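The four-person range is the sum of the low ends and the sum of the high ends of the four salary bands above. A quick check in Python, using only the figures quoted in this article:

```python
# Fully loaded annual cost bands (USD) quoted above: (low, high)
roles = {
    "data engineer": (120_000, 160_000),
    "BI analyst": (90_000, 130_000),
    "data analyst": (80_000, 110_000),
    "ops manager": (75_000, 100_000),
}

low = sum(lo for lo, _ in roles.values())
high = sum(hi for _, hi in roles.values())
print(f"${low:,} to ${high:,}")  # $365,000 to $500,000
```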

Beyond headcount, there are costs that are harder to see.

The fully loaded cost of producing one business report (analyst time, engineering time, review cycles) runs approximately $2,500 to $5,000. For something that is outdated the moment it is published. For something that needs to be rebuilt next month.

The 24 to 48 hour detection gap is where operational costs compound. A company discovering exceptions 48 hours after they occur instead of within one hour faces a cost differential of 15 to 20 times for the same underlying issue. The 15-minute fix becomes a customer escalation, a remediation effort, and a post-mortem.

And then there is the AI adoption cost nobody is talking about.

Companies are being pushed to use AI for data and ops. Most are trying. Most are hitting the same wall. AI tools generate outputs that cannot be verified, audited, or trusted in production.

An AI that generates SQL from plain language sounds useful until it generates SQL that is subtly wrong. Wrong join. Wrong filter. Wrong definition of a metric. That answer reaches a dashboard or a report. Nobody catches it because it looked plausible. Compliance teams cannot stand behind outputs they cannot trace. Finance teams cannot present numbers to a board if they cannot explain exactly how they were calculated.

This is the AI governance problem. It is growing. Most AI tools for data do not solve it. They make it worse.

What the Gap Looks Like From Each Side

The data-to-decision gap affects every role differently. But the root cause is the same for all of them.

- The ops manager starts every morning without a clear picture: numbers from yesterday, or from whenever someone last ran the report. The first part of the morning goes to figuring out where things stand, messaging people, and checking something that may or may not be current.

  They find out about problems through people, not through their systems. By the time an exception reaches them, it has already been sitting there for hours. They prepare for reviews by gathering numbers manually from different places. Fifteen minutes into the meeting, someone questions a figure.

- The data analyst starts with a backlog. Most requests are some version of "pull this number," "update this report," or "fix this dashboard that broke because something changed upstream." A large portion of the week goes to data prep: mechanical work that does not use the skills they were hired for.

  They answer the same questions repeatedly from different people. They build things that go stale. They carry knowledge nobody else has, which makes them impossible to replace, which traps them in maintenance work permanently.

- The executive is never fully confident in the numbers they work with. Not because the team is incompetent, but because by the time information travels from where it is generated to where a decision gets made, it has been summarized, delayed, and prepared by someone with a stake in how it looks.

  They get surprised by things that were visible in the data the whole time. They watch their best people spend time preparing slides when they should be making decisions.

Three different roles. Three different daily frustrations. One structural cause.

Check out: What is a governed metric?

How Operations Run Today vs. How They Can Run

Today, at most companies:

A question gets asked. It joins a queue. Someone gets to it when they can. The answer arrives two to three days later, formatted by a person who had other things to do.

Problems are discovered through people. An exception sits in data for 24 to 48 hours before reaching someone with authority to act.

Metrics mean different things in different reports. Every review starts with fifteen minutes of establishing which number is correct.

When key people leave, the logic behind reports and definitions leaves with them. Something drifts quietly. Nobody notices until a number looks wrong in a meeting two months later.

When the infrastructure layer exists:

A question gets asked in plain language and returns an answer in minutes, built from the same governed definitions as the weekly report. No ticket. No queue. No analyst in the middle.

Exceptions surface before anyone goes looking. The right person gets notified the moment something crosses a threshold, with context attached. Problems get caught when they are still small.

Every metric has one definition, versioned and owned by the team. Two people in the same meeting pull the same number because they are drawing from the same source. The reconciliation at the start of every review disappears.

When an analyst leaves, the definitions stay. The caveats stay. The reasoning behind every filter and join stays. The next person works from the same foundation as the last one.
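Here is a minimal sketch of what "one definition, versioned and owned" can mean in practice. It assumes definitions are stored as records rather than in someone's head. The `MetricDefinition` and `MetricRegistry` names and fields are hypothetical. They mirror the items this article lists (the rule itself, its owner, and the caveats behind it), not any specific product, and the SQL strings are illustrative only and never executed.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class MetricDefinition:
    name: str
    version: int
    owner: str
    sql: str                       # the single governed calculation (illustrative SQL)
    caveats: tuple[str, ...] = ()  # reasoning that would otherwise live in someone's head

@dataclass
class MetricRegistry:
    _history: dict[str, list[MetricDefinition]] = field(default_factory=dict)

    def publish(self, name: str, owner: str, sql: str, caveats: tuple[str, ...] = ()) -> MetricDefinition:
        """Add a new version; earlier versions are kept, never overwritten."""
        versions = self._history.setdefault(name, [])
        definition = MetricDefinition(name, len(versions) + 1, owner, sql, caveats)
        versions.append(definition)
        return definition

    def current(self, name: str) -> MetricDefinition:
        return self._history[name][-1]

    def history(self, name: str) -> list[MetricDefinition]:
        return list(self._history[name])

registry = MetricRegistry()
registry.publish(
    "active_customers", owner="analytics",
    sql="SELECT COUNT(DISTINCT customer_id) FROM logins WHERE login_at >= CURRENT_DATE - 30",
)
registry.publish(
    "active_customers", owner="analytics",
    sql="SELECT COUNT(DISTINCT customer_id) FROM logins "
        "WHERE login_at >= CURRENT_DATE - 30 AND NOT is_trial",
    caveats=("Trial accounts excluded from v2 onward.",),
)

latest = registry.current("active_customers")
print(latest.version, latest.caveats)  # 2 ('Trial accounts excluded from v2 onward.',)
```

Because both versions are kept, a number from last quarter can still be traced to the definition that produced it, even after the person who wrote that definition has moved on.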

Why Hiring More People Does Not Fix This

This is the most common response. And it makes sense on the surface. The backlog is too long. Add more capacity.

But headcount addresses capacity. It does not address structure.

More analysts means more people carrying knowledge that will eventually walk out the door. More engineers means a larger maintenance surface that breaks in more places. The backlog grows slower. It still grows.

The knowledge risk, the monitoring gap, the definition drift: none of these are solved by adding people. They exist because the infrastructure requires humans to do work that systems should be doing.

The companies closing this gap are not doing it by hiring. They are doing it by replacing the chain itself.

Closing the Gap

The data-to-decision gap is not a people problem. The people managing it are doing exactly what the infrastructure requires them to do.

It is a structural problem. The infrastructure was built when moving information between systems required a human at every step. That assumption no longer holds, but most companies have not rebuilt their infrastructure to reflect it.

Closing the gap requires four things working together.

Every data source connected and syncing continuously, with pipeline failures surfaced automatically before anyone starts their day on stale numbers.

Institutional knowledge stored in the platform, not in people. Metric definitions, business rules, table annotations, and data caveats are versioned, owned, and accessible regardless of who is on the team.

Continuous monitoring that surfaces exceptions before anyone goes looking. Not a dashboard that requires someone to open it. A system that watches and routes the right information to the right person the moment something crosses a threshold.

Plain language access to live data. Any business question answered in minutes, built from governed definitions, with the source always visible and auditable.
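The first of these, surfacing pipeline failures before anyone starts the day on stale numbers, often comes down to a freshness check. The check compares each source's last successful sync with how stale that source is allowed to be. Below is a minimal sketch. It assumes sync timestamps are already recorded somewhere. The source names and limits are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical per-source freshness limits: how old the last successful sync may be.
MAX_AGE = {
    "orders_db": timedelta(hours=1),
    "crm": timedelta(hours=6),
    "billing": timedelta(hours=24),
}

def stale_sources(last_synced: dict[str, datetime], now: datetime) -> list[str]:
    """Return sources whose last successful sync is older than allowed (or never recorded)."""
    stale = []
    for source, limit in MAX_AGE.items():
        synced = last_synced.get(source)
        if synced is None or now - synced > limit:
            stale.append(source)
    return stale

now = datetime(2026, 5, 4, 7, 0, tzinfo=timezone.utc)
last_synced = {
    "orders_db": now - timedelta(minutes=20),
    "crm": now - timedelta(hours=9),  # e.g. a connector that went down overnight
}
print(stale_sources(last_synced, now))  # ['crm', 'billing']
```

Run on a schedule before the working day starts, a non-empty result is what would be routed to whoever owns the affected pipelines.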

This is the infrastructure problem Autonmis is built to solve. As an agentic platform for data and ops, it sits between a company's raw operational data and the decisions its people make from it: connecting sources, governing definitions, surfacing exceptions, and answering questions without a human chain managing each step.

Not a BI tool. Not a dashboard builder. Not a replacement for the data team.

The data team's work does not diminish when the chain is replaced. It becomes more permanent. Every definition they write, every caveat they know, every business rule they understand gets encoded into the platform instead of living only in their head. Their knowledge runs the company, not just the current report.

Check out: How to Monitor SLA Breaches in Real Time

Frequently Asked Questions

What is the data-to-decision gap?

The data-to-decision gap is the delay between when something happens in a business and when the right person has the information and context to act on it. In most mid-market companies this gap runs 24 to 48 hours for operational questions and several days for anything requiring a report. It exists because information moves through people rather than systems, and people have queues, competing priorities, and finite bandwidth.

Why does it take so long to get answers from a data team?

Because every data question has a human in the middle with a backlog. The analyst receives the request, pulls the data from wherever it lives, cleans it, joins it across tables, applies the right filters, and formats it into something usable. That process takes one to three days for a straightforward question. It is not a competence problem. It is an infrastructure problem: the infrastructure was designed when there was no alternative to having a person do all of that manually.

What is the real cost of the data-to-decision gap?

The direct costs show up in a budget: analyst time on data preparation at 40 to 60 percent of total work time, engineering time on pipeline maintenance at 30 to 50 percent, and $2,500 to $5,000 fully loaded to produce a single business report. The indirect costs are larger and harder to see: decisions made on outdated information, operational exceptions that compound for 24 to 48 hours before anyone acts, and institutional knowledge that resets every time a key person leaves.

How do companies usually try to fix the data bottleneck?

The default response is headcount. Hire another analyst. Add a data engineer. Build a bigger team. This increases capacity temporarily but does not fix the structure. The backlog grows slower with a larger team. It still grows. The knowledge risk, the monitoring gap, and the metric definition drift are not solved by headcount. They exist because the infrastructure requires humans to manage work that systems should be doing.

Does replacing the manual chain mean replacing the data team?

No. The parts that get replaced are the ones that were never the best use of the data team's skills. Pulling the same report every week. Explaining the same metric to a different stakeholder. Fixing a pipeline that broke because a schema changed upstream. Monitoring dashboards for exceptions a system could catch automatically. The work that requires analytical judgment, domain expertise, and real understanding of the business does not go anywhere. It becomes more visible and more permanent because it gets encoded into the platform instead of sitting only in someone's head.

Is the data-to-decision gap specific to certain industries or company sizes?

The structural condition exists across industries and company sizes. A 40-person SaaS company with one analyst and three ops managers can have a severe gap. A 2,000-person logistics company with a 15-person data team can have the same problem. It shows up in retail ops, healthcare operations, financial services, supply chain, and anywhere that data questions need answers faster than a human queue can provide them. The gap is defined by condition, not by industry or headcount.
